ACID Transaction Durability At Scale

K.S. Bhaskar

We have heard repeatedly from database users that for truly ACID transactions, no database matches the scalability of YottaDB, or its upstream GT.M. Our recent blog post ACID Transactions Are Hard At Scale … Part 1 discusses why Consistency and Isolation at scale are hard, and ACID Transactions Are Hard At Scale … Part 2 discusses how YottaDB meets that need. This blog post discusses why ACID transaction Durability is hard at scale, and how YottaDB achieves it.

What is Durability?

We summarize Durability thus:

Durability means that once the transaction is completed, it is permanently recorded in the database and cannot be erased even if the computer crashes.

Since YottaDB, or any other database, is software, it relies on the correct operation of the underlying hardware. Without a guarantee of correct operation of the underlying hardware,[1] correct operation of software cannot be guaranteed. Since the perfect hardware that never fails has not yet been invented, a more practical requirement is that hardware failure does not damage stored information, i.e., it is OK for computers to crash, but when a computer crashes, data that has been committed to nonvolatile storage survives intact. Database Durability starts with the premise that while hardware failure can make data unavailable, the data is intact and recoverable. (In a subsequent blog post, we will discuss application availability and Durability when this premise is violated.)

Why is Durability Hard to Implement at Scale?

As committing a transaction simply changes blocks in the database file in the filesystem, committing a transaction is theoretically just a matter of writing those blocks. But there are gaps between theory and practice, especially for performance at scale.

- Since the database file is a random access file, updating the file involves writing blocks at multiple offsets in the file. While this may be a lesser performance concern with solid-state storage which, unlike spinning disks, does not have to move heads, random writes on solid-state storage are still slower than sequential writes.

- Since a computer can crash in the middle of writing multiple blocks, when a computer is rebooted after a crash, you need to know whether or not all the blocks of a transaction were written. If all of them were written and can be read back, the transaction is committed, but if only some of them were written, then the transaction is not committed and the blocks that were written need to have their prior contents restored.[2]

- Even when all the blocks are written to the filesystem by the database, owing to buffering by Linux, the data may not immediately be committed to nonvolatile storage.
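The last point can be illustrated with a minimal Python sketch. This is not YottaDB code; it assumes a POSIX system, and the function name durable_append is hypothetical. A successful write() only hands data to the Linux buffer cache; the call to fsync() is what asks the kernel to push that data to the storage device before returning.

```python
import os


def durable_append(path: str, payload: bytes) -> None:
    """Append payload to path, returning only after fsync() has succeeded."""
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_APPEND, 0o600)
    try:
        view = memoryview(payload)
        while view:
            # os.write() may write fewer bytes than requested, so loop.
            written = os.write(fd, view)
            view = view[written:]
        # Until this returns, the data may exist only in kernel buffers.
        os.fsync(fd)
    finally:
        os.close(fd)
```

For a newly created file, the containing directory may also need its own fsync() before the file name itself is guaranteed to survive a crash; the sketch leaves that out for brevity.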

How Does YottaDB Implement Durability at Scale?

All databases ultimately implement Durability through the file system, and there are only a limited number of ways to do this, e.g., by calling fsync(). While we cannot tell you how the myriad other databases use these filesystem APIs, we can tell you how YottaDB implements Durability at scale for ACID transactions.

YottaDB has multiple ways to access database files. This blog post describes the most common Buffered Global (BG) access method with before-image journaling. This technique writes update information to a journal file.

Each database file has a cache of database blocks in a shared memory segment. This cache sits in front of the Linux file buffer cache because it is much faster for processes to read from and write to shared memory than the filesystem. Each database file also has an active journal file that is written to sequentially, i.e., by appending to it.

An epoch is a periodic checkpoint such that if the system crashes immediately after an epoch, the database file has structural integrity and is up to date, i.e., it does not need repair. At an epoch, all dirty (modified) global buffers are written to the filesystem and dirty buffers in the filesystem are written to nonvolatile storage with an fsync(). By default, epochs occur every 300 seconds, but can occur more frequently if YottaDB encounters an earlier opportunity.
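As a rough illustration of what an epoch does, here is a minimal Python sketch. It is a toy model, not YottaDB's implementation: the name write_epoch, the 4096-byte block size, and the representation of global buffers as a dictionary are all assumptions made for the example.

```python
import os

BLOCK_SIZE = 4096  # hypothetical block size for this sketch


def write_epoch(db_fd: int, global_buffers: dict, dirty: set) -> None:
    """Flush every dirty buffer to the database file, then fsync the file."""
    for block_no in sorted(dirty):
        block = global_buffers[block_no]
        # Write the cached block at its offset in the random access file.
        written = os.pwrite(db_fd, block, block_no * BLOCK_SIZE)
        if written != len(block):
            raise OSError(f"short write while flushing block {block_no}")
    # Push the filesystem's own dirty buffers to nonvolatile storage.
    os.fsync(db_fd)
    # Nothing is dirty any more: the file is up to date as of this epoch.
    dirty.clear()
```

After write_epoch() returns, the file on disk matches the cache, which is the property that lets recovery treat an epoch as a known-good starting point.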

There are two types of data records in a journal file: before-image records and update records. The first time that a block in a database file is modified after an epoch, a before-image record of the unmodified block is written to the journal file. Setting or deleting nodes generates update records, with each update record preceded in the journal file by before-image records of the block(s) it is modifying. If multiple updates within an epoch modify the same block, only the first update of the series causes a before-image record of the block to be written to the journal file.
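The rule that only the first modification of a block after an epoch produces a before-image record can be sketched as follows. This is a toy in-memory model in Python, not YottaDB's journal format: the class name ToyJournal, the tuple-shaped records, and the representation of a block as a dictionary of nodes are assumptions made for illustration.

```python
class ToyJournal:
    """In-memory stand-in for a journal file (hypothetical record layout)."""

    def __init__(self):
        self.records = []     # appended to sequentially, like a journal file
        self._imaged = set()  # blocks that already have a before-image this epoch

    def epoch(self):
        self.records.append(("EPOCH",))
        # After an epoch, the next change to any block needs a fresh before-image.
        self._imaged.clear()

    def set(self, blocks, block_no, key, value):
        if block_no not in self._imaged:
            # First modification since the epoch: save a copy of the unmodified block.
            self.records.append(("BEFORE_IMAGE", block_no, dict(blocks[block_no])))
            self._imaged.add(block_no)
        # The update record follows the before-image of the block it modifies.
        self.records.append(("SET", block_no, key, value))
        blocks[block_no][key] = value


blocks = {0: {"a": 1}, 1: {}}
journal = ToyJournal()
journal.epoch()
journal.set(blocks, 0, "a", 2)  # writes a before-image of block 0, then the update
journal.set(blocks, 0, "b", 3)  # same block, same epoch: update record only
journal.set(blocks, 1, "c", 4)  # different block: a new before-image
```

In this run the journal holds two before-image records for three updates, because the second update touches a block that was already imaged in the current epoch.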

Committing a transaction conceptually consists of two steps:

- Writing the journal records to the journal file and committing them to nonvolatile storage with an fsync(), which ensures Durability.

- Updating the global buffers for the transaction’s updates. YottaDB processes cooperate to manage the database, and the blocks in global buffers eventually get written to the filesystem, at or before the next epoch.
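The two conceptual steps above can be sketched in Python as follows. This is a simplified illustration, not YottaDB's commit code: the function name commit, the JSON-lines record format, and the TCOMMIT marker name are assumptions, and global buffers are modeled as a plain dictionary.

```python
import json
import os


def commit(journal_fd: int, global_buffers: dict, updates: dict) -> None:
    """Journal the updates durably, then apply them to the in-memory buffers."""
    lines = [json.dumps({"type": "SET", "key": k, "value": v}) for k, v in updates.items()]
    lines.append(json.dumps({"type": "TCOMMIT"}))  # marks the end of the transaction
    data = ("\n".join(lines) + "\n").encode()

    # Step 1: write the journal records and force them to nonvolatile storage.
    view = memoryview(data)
    while view:
        view = view[os.write(journal_fd, view):]
    os.fsync(journal_fd)

    # Step 2: only now update the cached blocks; they reach the database
    # file later, at or before the next epoch.
    global_buffers.update(updates)
```

The ordering is the point of the sketch: once fsync() returns, the transaction can be reconstructed from the journal even if the updated buffers are lost in a crash.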

While Consistency and Isolation are relevant when an application is running, Durability is relevant when recovering from system crashes.

When recovering from a system crash, YottaDB reads the end of the journal file. If it indicates that the system crashed immediately following an epoch, recovery is only a matter of cleaning up some metadata. If the end of the journal file indicates a crash that happened between epochs, YottaDB recovers the database, i.e., provides Durability, as follows:

- It finds the end of the last committed transaction from the records in the journal file. Any transaction update records after that belong to an incomplete transaction, i.e., the system crashed before the transaction was committed, and so the transaction did not happen – Atomicity requires that either all updates of a transaction be committed, or none.

- Reading the journal file backward from the end, it reads before-image records, and applies those to the database file, a process referred to as recovery or rollback. When it reaches an epoch, the database file has been restored to the state it had at the epoch, i.e., it is structurally sound and up to date as of the epoch.

- Reading the journal file forward from the epoch, it processes and applies the update records of each transaction to the database. This brings the database up to date with the last committed transaction, thus delivering Durability.
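The three recovery steps above can be sketched with a toy model in Python. It is not YottaDB's recovery code: the function name recover, the tuple-shaped records (EPOCH, BEFORE_IMAGE, SET, TCOMMIT), and the representation of a block as a dictionary are assumptions made for illustration.

```python
def recover(blocks: dict, journal: list) -> dict:
    """Restore blocks to the state of the last committed transaction."""
    # Step 1: find the last commit; anything after it is an incomplete transaction.
    last_commit = max(
        (i for i, rec in enumerate(journal) if rec[0] == "TCOMMIT"), default=-1
    )

    # Step 2: read backward, applying before-images until the last epoch.
    i = len(journal) - 1
    while i >= 0 and journal[i][0] != "EPOCH":
        if journal[i][0] == "BEFORE_IMAGE":
            _, block_no, contents = journal[i]
            blocks[block_no] = dict(contents)
        i -= 1
    epoch = i  # index of the epoch record, or -1 if there is none

    # Step 3: read forward from the epoch, replaying committed updates only.
    for rec in journal[epoch + 1 : last_commit + 1]:
        if rec[0] == "SET":
            _, block_no, key, value = rec
            blocks[block_no][key] = value
    return blocks


# At the epoch, block 0 held {"a": 1} and block 1 held {"x": 9}.
journal = [
    ("EPOCH",),
    ("BEFORE_IMAGE", 0, {"a": 1}), ("SET", 0, "a", 2), ("TCOMMIT",),
    ("BEFORE_IMAGE", 1, {"x": 9}), ("SET", 1, "x", 10), ("TCOMMIT",),
    ("SET", 0, "a", 3),  # the crash happened before this transaction committed
]
# Database file as found after the crash: block 0 was flushed, block 1 was not.
on_disk = {0: {"a": 2}, 1: {"x": 9}}
recovered = recover(on_disk, journal)
```

Here recovered ends up as {0: {"a": 2}, 1: {"x": 10}}: both committed transactions are present, and the incomplete update of "a" to 3 is not.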

Of course, while conceptually simple, there is virtually unlimited complexity in implementing this Durability mechanism so that it scales. Here are just a few examples:

- While committing a transaction conceptually involves just the two steps listed above, the actual commit code involves multiple phases internally; a straightforward implementation might deliver perhaps half the throughput YottaDB actually delivers.

- As bypassing Linux filesystem buffering for journal files on high-end IO subsystems such as NVMe drives and SANs connected by Fibre Channel can yield higher write performance, the SYNC_IO option instructs YottaDB to bypass the buffering.

- Since even real-time core-banking systems have batch processes (see 100.000 Foot View of Core-Banking Systems), if a batch process can restart after a crash based on database state, such a batch process may require YottaDB to provide just Atomicity, Consistency and Isolation. YottaDB provides such applications with an option to declare a transaction as a “BATCH” transaction that can accept relaxed Durability. Of course, if a subsequent transaction requires Durability, database serialization mandates that YottaDB make all prior transactions Durable.

- A production database usually consists of multiple database files, whose epochs typically happen at different times.

- While database updates that are not within ACID transactions do not demand the same guarantee of Durability, they do need to be made Durable, and this Durability typically happens in a fraction of a second.

Over the years, the YottaDB code base has provided transaction Durability to mission critical applications around the world, in banking & finance, healthcare, and more. We have tried to explain here how YottaDB implements that Durability, and why you should trust it to do so.

Looking Ahead – When Hardware Failure Damages Or Destroys Filesystems

Hardware failures can damage or destroy filesystems. Failures can also make datacenters unavailable (even datacenters of Amazon, Google, and Microsoft, no matter what any marketing hype says). Our blog post to follow, on Replication, will discuss how YottaDB responds to this need.

In Conclusion

If you have questions, or would like to learn more about how YottaDB can meet your transaction processing application’s need to scale while providing five nines availability, please contact us.

Footnotes

1. Memory and storage have error rates (e.g., see DRAM Errors in the Wild: A Large-Scale Field Study) that are unacceptable for financial transaction processing. Techniques like mirroring and error-correcting RAM reduce the error rates to acceptable levels. Ideally, the error correction technique would be single-error correction, double-error detection, so that when uncorrected errors occur, they are detected rather than resulting in incorrect software operation.

2. Some databases use copy-on-write (CoW) to deal with this requirement, but copy-on-write has scalability limitations, e.g., from Copy-On-Write – When to Use It, When to Avoid It, “CoW is an expensive process if done aggressively. If on every single write, we create a copy then in a system that is write-heavy, things could go out of hand very soon.”

Credits

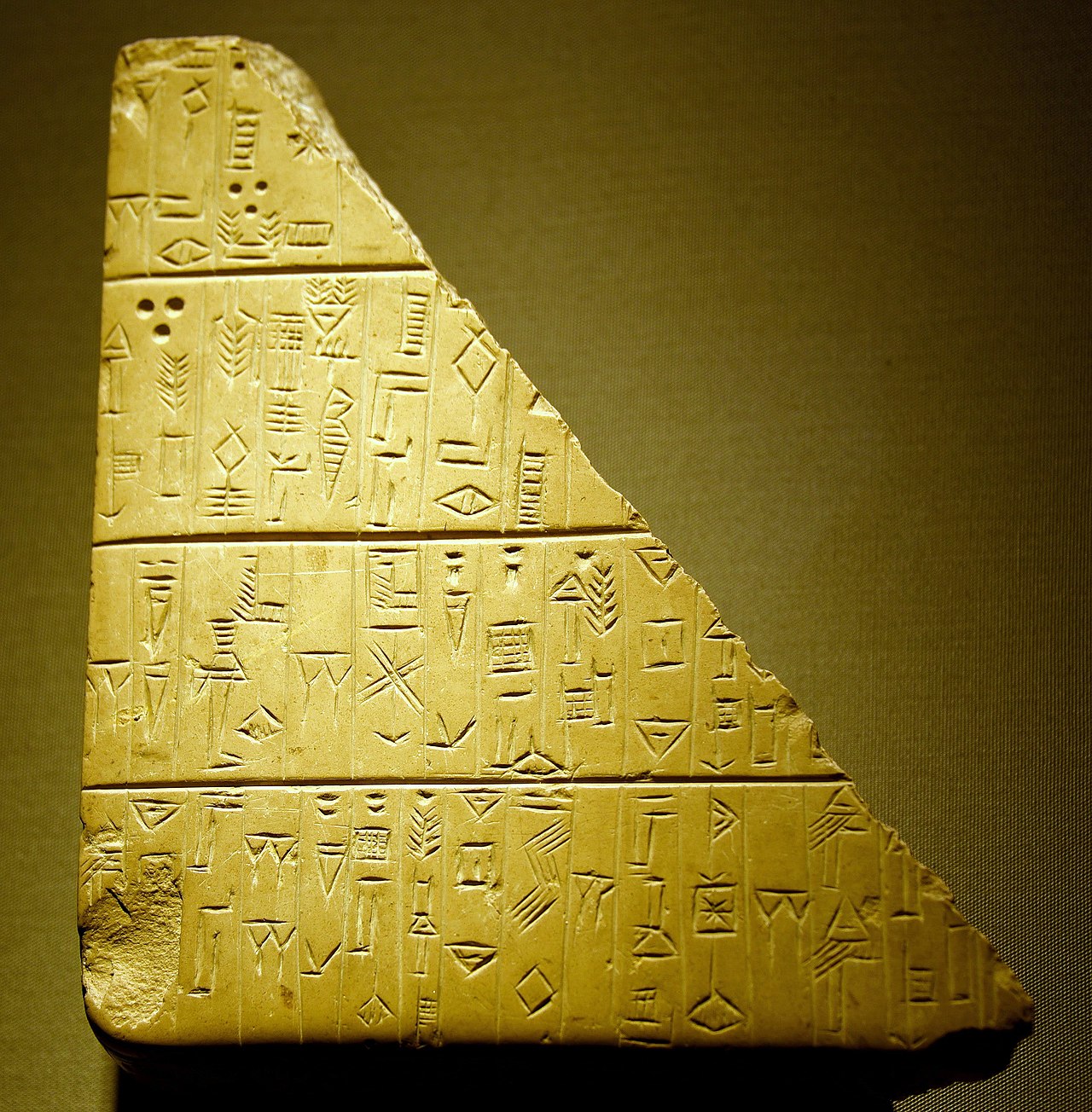

- Photo of Stone tablet with cuneiform inscription by Dr. Osama Shukir Muhammed Amin used under Creative Commons Attribution-Share Alike 4.0 International. It documents a land purchase by a man named Tupsikka. From Dilbat, Iraq. 2400-2200 BCE.

- Photo of Cuneiform clay tablet by user Zinkir used under Creative Commons Attribution-Share Alike 4.0 International. It complains to the merchant Ea-Nasir about delivery of the wrong grade of copper.

Published on February 26, 2026